Beyond Static UI: What “Lovable” Means in 2026

A lovable site is not only visually pleasing—it is legible, fast, trustworthy, and appropriately adaptive. In 2026, “AI-native” often means the experience can reshape copy, modules, and journeys based on declared intent and behavioral signals—while staying on-brand, accessible, and measurable. Get this wrong and you ship either a gimmick that slows the page or a personalization engine that creeps users out.

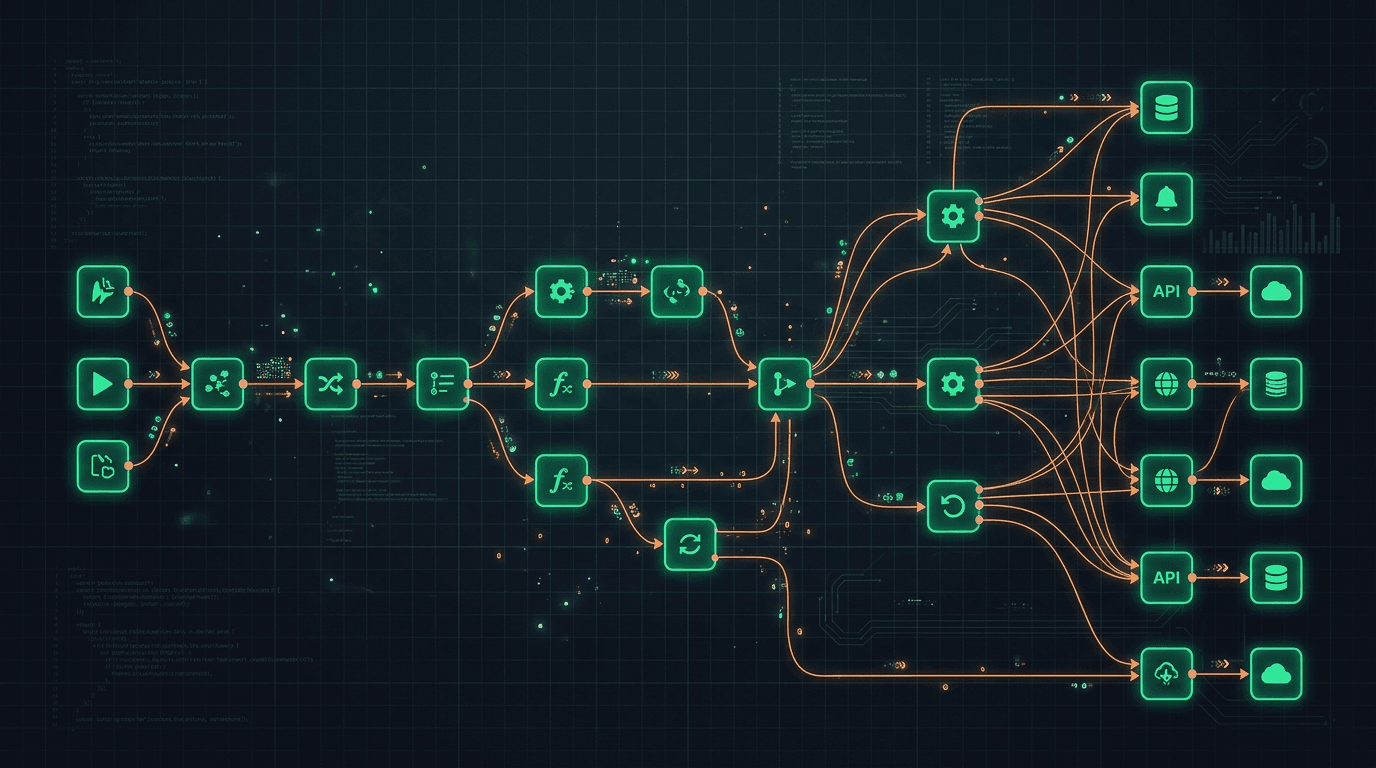

This article breaks down dynamic personalization patterns, why distinctive design matters in a sea of template-driven AI sites, how agentic commerce and assistant-friendly structure intersect, UX patterns that help both humans and LLM-based helpers, and metrics that go beyond raw click-through rate.

Dynamic Personalization Without Creepiness

Effective personalization starts with consent and clarity. Use declared intent first—industry, role, company size, use case—before inferring sensitive attributes. Layer behavioral hints carefully: repeat visits to pricing versus security pages, depth of scroll on case studies, and engagement with interactive demos.

Use AI to reorder modules, tune headlines, surface proof points, and shorten forms—not to hide material terms, shipping constraints, or pricing footnotes. Always ship a baseline experience that works when JavaScript is limited or personalization is blocked, and disclose material personalization in your privacy policy.
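The ordering described above (consent, then declared intent, then behavioral hints, then a baseline) can be sketched in a few lines. This is a minimal illustration rather than a reference implementation: the `Visitor` fields, the hero strings, and the three-visit threshold are all assumptions made up for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

# Hypothetical pre-approved hero variants, keyed by declared role.
APPROVED_HEROES = {
    "security": "Audit-ready controls your reviewers can verify.",
    "finance": "Predictable pricing with no surprise overages.",
}
BASELINE_HERO = "One platform for your whole team."


@dataclass
class Visitor:
    consented: bool = False
    declared_role: Optional[str] = None  # stated by the user, e.g. in a form
    page_views: Dict[str, int] = field(default_factory=dict)  # behavioral hint


def choose_hero(visitor: Visitor) -> str:
    """Pick hero copy: declared intent first, behavior second, baseline otherwise."""
    if not visitor.consented:
        return BASELINE_HERO
    if visitor.declared_role in APPROVED_HEROES:
        return APPROVED_HEROES[visitor.declared_role]
    # Behavioral hint: repeat visits to security pages (threshold is an assumption).
    if visitor.page_views.get("/security", 0) >= 3:
        return APPROVED_HEROES["security"]
    return BASELINE_HERO
```

Because the baseline is the default return value, a visitor who blocks personalization or never gives consent still gets a complete page.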

Implementation notes

Cache approved variants of hero and module copy after human review rather than generating unbounded text on every page view—latency, brand voice, and compliance all improve. Log which variant a user saw when they convert so you can attribute fairly.
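As a rough sketch of that idea, the in-memory store below serves only copy that a reviewer has approved and records every exposure so conversions can be attributed later. The class name, its methods, and the record fields are hypothetical; a production system would likely back this with a real cache and an analytics pipeline.

```python
import time


class VariantStore:
    """In-memory stand-in for a cache of human-approved copy variants."""

    def __init__(self):
        self._approved = {}  # variant_id -> {"text": ..., "reviewer": ...}
        self.exposures = []  # append-only exposure log

    def approve(self, variant_id, text, reviewer):
        # Only reviewed copy enters the cache; the reviewer is kept for audit.
        self._approved[variant_id] = {"text": text, "reviewer": reviewer}

    def serve(self, variant_id, user_id, fallback_id="baseline"):
        # Unknown or unapproved ids fall back to the baseline variant,
        # which is assumed to have been approved already.
        served_id = variant_id if variant_id in self._approved else fallback_id
        self.exposures.append({"user": user_id, "variant": served_id, "ts": time.time()})
        return self._approved[served_id]["text"]

    def variants_seen_by(self, user_id):
        # At conversion time, look up what the user was actually shown.
        return [e["variant"] for e in self.exposures if e["user"] == user_id]
```

Logging the variant that was actually served, not the one that was requested, keeps attribution honest when a fallback kicks in.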

The Anti-Bot Aesthetic: Standing Out in a Sea of AI Sameness

When everyone uses the same handful of visual tropes, distinctive art direction signals credibility: typography that fits your segment, photography of real customers, editorial layouts, and motion that supports comprehension rather than distraction. Invest in named case studies with metrics you can defend and update.

Models can generate generic landing pages in minutes; they cannot replicate your proof without lying. Lean into what is hard to copy: founder voice, community, proprietary data, and design systems that reflect brand values.

Agentic Commerce and Assisted Buying

Forward-looking sites expose structured offers, comparison tables, shipping and return rules in plain language, and FAQs that both humans and shopping agents can parse. Consider machine-friendly summaries adjacent to marketing prose: consistent SKU names, explicit compatibility statements, and JSON-LD where appropriate.
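One way to produce such a machine-friendly summary is to generate JSON-LD from the same product record that feeds the page. The sketch below uses the schema.org `Product` and `Offer` types; the function names, the SKU, and the URL are placeholders, and which properties a given shopping agent reads is not something this example can promise.

```python
import json


def offer_json_ld(sku, name, price, currency, in_stock, url):
    """Build a schema.org Product with a nested Offer as a JSON-LD string."""
    availability = "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "sku": sku,
        "name": name,
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }
    return json.dumps(data, indent=2)


def script_tag(json_ld):
    # Escape "</" so the payload cannot close the script element early.
    return '<script type="application/ld+json">' + json_ld.replace("</", "<\\/") + "</script>"
```

Generating the markup from the source record, rather than writing it by hand, helps keep the SKU name and price identical in the visible copy and the structured data.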

When agents initiate checkout or configuration flows, ensure idempotent APIs and clear confirmation steps so partial automation does not strand users.
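A common way to make a checkout call idempotent is to have the caller send a unique key and to return the stored result on any retry. The toy service below illustrates the pattern under that assumption; the class, the key format, and the status strings are invented for the example and do not describe any particular commerce API.

```python
class CheckoutService:
    """Toy in-memory checkout that deduplicates requests by idempotency key."""

    def __init__(self):
        self._by_key = {}  # idempotency_key -> order record
        self._by_id = {}   # order_id -> order record
        self.orders_created = 0

    def create_order(self, idempotency_key, cart):
        # A retried request with the same key returns the original order
        # instead of creating a second one.
        if idempotency_key in self._by_key:
            return self._by_key[idempotency_key]
        self.orders_created += 1
        order = {
            "order_id": f"ord-{self.orders_created}",
            "items": list(cart),
            "status": "pending_confirmation",  # nothing is charged yet
        }
        self._by_key[idempotency_key] = order
        self._by_id[order["order_id"]] = order
        return order

    def confirm(self, order_id):
        # Explicit confirmation step, kept separate from order creation.
        order = self._by_id[order_id]
        order["status"] = "confirmed"
        return order
```

Keeping confirmation as its own call means an agent that times out and retries cannot double-order, and the user still sees a clear final step.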

UX for LLMs: Crawlable, Quotable, Honest

Assistants that run alongside users often fetch or summarize your site. Help them help buyers:

- Stable URLs and descriptive `<title>` elements.

- Clear H1/H2 hierarchy; do not bury critical facts only inside images or videos without transcripts.

- Last updated stamps on regulated or fast-changing claims.

- Accessible components so keyboard and screen-reader users get the same facts assistants extract from text.
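Some of the checks in this list can be automated. The sketch below uses Python's standard `html.parser` to flag a missing title, a missing or duplicated H1, skipped heading levels, and images with no `alt` attribute. It is a rough lint under simple assumptions (static HTML, no rendering of client-side content), and the `PageAudit` and `audit` names are made up for the example.

```python
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collect the title, heading levels, and images that lack an alt attribute."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.heading_levels = []
        self.images_missing_alt = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag in ("h1", "h2", "h3", "h4", "h5", "h6"):
            self.heading_levels.append(int(tag[1]))
        elif tag == "img" and "alt" not in dict(attrs):
            self.images_missing_alt += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data.strip()


def audit(html):
    """Return a list of structural problems found in one HTML document."""
    parser = PageAudit()
    parser.feed(html)
    problems = []
    if not parser.title:
        problems.append("missing <title>")
    if parser.heading_levels.count(1) != 1:
        problems.append("expected exactly one h1")
    # A jump such as h1 followed by h3 skips a level.
    for prev, cur in zip(parser.heading_levels, parser.heading_levels[1:]):
        if cur > prev + 1:
            problems.append(f"heading jumps from h{prev} to h{cur}")
    if parser.images_missing_alt:
        problems.append(f"{parser.images_missing_alt} image(s) without alt text")
    return problems
```

A lint like this only catches structure. Whether the alt text or transcript actually carries the critical fact still needs a human read.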

Design Systems, Brand Guardrails, and AI Outputs

Personalization should not fracture your design system. Define token-level rules: which headings, spacing, and color contrasts AI-generated modules may use. Pre-approve layout patterns (testimonial bands, comparison tables, FAQ blocks) so generation chooses among safe components rather than inventing new CSS spaghetti.

Brand voice guidelines belong in a short, machine-readable style guide the model must follow—banned phrases, required disclaimers, and reading level targets. Human editors should spot-check weekly until metrics stabilize.
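A machine-readable style guide can double as an automated gate in front of the human spot-check. The sketch below assumes a small dictionary of rules and checks one generated module against it. Every value in `STYLE_GUIDE` is illustrative, and the words-per-sentence limit is only a crude stand-in for a real reading-level measure.

```python
import re

# Hypothetical machine-readable style guide; every value here is illustrative.
STYLE_GUIDE = {
    "banned_phrases": ["revolutionary", "game-changing"],
    "required_disclaimer": "Pricing excludes taxes.",
    "max_words_per_sentence": 20,  # crude stand-in for a reading-level target
    "allowed_components": {"testimonial_band", "comparison_table", "faq_block"},
}


def check_module(component, copy, guide=STYLE_GUIDE):
    """Return a list of guardrail violations for one generated module."""
    violations = []
    if component not in guide["allowed_components"]:
        violations.append(f"component not pre-approved: {component}")
    lowered = copy.lower()
    for phrase in guide["banned_phrases"]:
        if phrase in lowered:
            violations.append(f"banned phrase: {phrase}")
    if guide["required_disclaimer"] not in copy:
        violations.append("missing required disclaimer")
    # Split on sentence-ending punctuation followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", copy.strip()):
        if len(sentence.split()) > guide["max_words_per_sentence"]:
            violations.append("sentence exceeds word limit")
            break
    return violations
```

An empty list means the module may proceed to review. Anything else should block publication rather than just log a warning.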

CRO Experiments with AI Variants

Run controlled experiments when testing AI-written headlines or module order: a single change per cell, sufficient sample size, and guardrails that block variants which violate legal or accessibility checks. Prefer bandit approaches that roll back automatically when bounce rate spikes or error logs show client-side failures.
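To make the rollback idea concrete, here is a minimal epsilon-greedy bandit that disables a non-control variant once its bounce rate crosses a threshold. Epsilon-greedy is just one simple allocation strategy chosen for the sketch, and the thresholds, class name, and variant names are assumptions rather than recommended values.

```python
import random


class GuardedBandit:
    """Epsilon-greedy choice among variants, with rollback on a bounce guardrail."""

    def __init__(self, variants, control, epsilon=0.1,
                 max_bounce_rate=0.7, min_views=50, seed=None):
        self.control = control  # must be one of `variants`
        self.epsilon = epsilon
        self.max_bounce_rate = max_bounce_rate
        self.min_views = min_views
        self.stats = {v: {"views": 0, "conversions": 0, "bounces": 0} for v in variants}
        self.disabled = set()
        self._rng = random.Random(seed)

    def _conversion_rate(self, variant):
        s = self.stats[variant]
        return s["conversions"] / max(s["views"], 1)

    def choose(self):
        active = [v for v in self.stats if v not in self.disabled]
        if self._rng.random() < self.epsilon:
            return self._rng.choice(active)         # explore
        return max(active, key=self._conversion_rate)  # exploit

    def record(self, variant, converted=False, bounced=False):
        s = self.stats[variant]
        s["views"] += 1
        s["conversions"] += int(converted)
        s["bounces"] += int(bounced)
        # Guardrail: roll back a non-control variant whose bounce rate is too high.
        too_bouncy = s["bounces"] / s["views"] > self.max_bounce_rate
        if variant != self.control and s["views"] >= self.min_views and too_bouncy:
            self.disabled.add(variant)
```

The control is never disabled, so there is always a safe variant to serve after a rollback. A client-side error count could feed the same guardrail.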

Document experiment results in a living playbook so future teams do not rerun failed ideas.

Measuring Success: From Clicks to Relationship Depth

Augment classic conversion metrics with:

- Assisted conversions and multi-touch paths that include content-heavy pages.

- Return visits and time to the next meaningful action (signup, demo booked, doc read).

- Sales feedback on lead quality (“buyers arrived informed”).

- Support deflection after publishing clearer on-site answers.

AI-native UX should deepen conversation quality and trust, not only lift CTR on a single CTA.
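As one example of instrumenting a relationship-depth metric, the function below computes the time from a user's first recorded event to their first meaningful action. That definition is an assumption made for the sketch, as are the event names and the tuple layout; your analytics schema will differ.

```python
from datetime import datetime, timedelta

# Event names treated as "meaningful"; these labels are assumptions.
MEANINGFUL = {"signup", "demo_booked", "doc_read"}


def time_to_meaningful_action(events):
    """Map each user to the time between first event and first meaningful action.

    `events` is a list of (user_id, timestamp, event_name) tuples.
    Users with no meaningful action are omitted from the result.
    """
    first_seen, first_action = {}, {}
    for user, ts, name in sorted(events, key=lambda e: e[1]):
        first_seen.setdefault(user, ts)
        if name in MEANINGFUL:
            first_action.setdefault(user, ts)
    return {user: first_action[user] - first_seen[user] for user in first_action}


def return_visit_days(events):
    """Count the distinct calendar days on which each user was active."""
    days = {}
    for user, ts, _ in events:
        days.setdefault(user, set()).add(ts.date())
    return {user: len(d) for user, d in days.items()}
```

Tracking these per variant, alongside conversion rate, shows whether a headline that lifts clicks also shortens the path to a real next step.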

Performance Budgets When AI Features Ship

Personalization and on-the-fly generation can bloat JavaScript and delay LCP. Set performance budgets per template: max KB of JS, max third-party calls, and server-side or edge-cached personalization where possible. Run Core Web Vitals checks on both cold and warm visits; AI-heavy pages often regress on INP when hydration competes with other main-thread work.
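A per-template budget is easy to enforce in CI once the limits live in data. The check below is a minimal sketch: the template names, metric keys, and numbers are placeholders rather than recommendations, and the measured values are assumed to come from whatever build or lab tooling you already run.

```python
# Hypothetical per-template budgets; the numbers are placeholders, not advice.
BUDGETS = {
    "landing": {"js_kb": 170, "third_party_requests": 5},
    "pricing": {"js_kb": 120, "third_party_requests": 3},
}


def check_budget(template, measured, budgets=BUDGETS):
    """Return human-readable overruns for one template's measured metrics."""
    overruns = []
    for metric, limit in budgets[template].items():
        value = measured.get(metric, 0)
        if value > limit:
            overruns.append(f"{template}: {metric} is {value}, budget is {limit}")
    return overruns
```

Failing the build on a non-empty result keeps a new personalization bundle from quietly eating the budget.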

Lazy-load non-critical personalization bundles and prefetch likely next pages only when confidence is high. If an AI feature fails, fall back to static content instantly—never show a spinner for hero text.
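The instant-fallback rule can be expressed as a hard timeout around the personalization call. The sketch below uses `asyncio.wait_for` for that; the 50 ms default, the `hero_text` name, and the static string are assumptions, and the right timeout depends on where in your stack the call runs.

```python
import asyncio

STATIC_HERO = "One platform for your whole team."


async def hero_text(fetch_personalized, timeout_s=0.05):
    """Return personalized hero copy, or static copy if the call is slow or fails."""
    try:
        return await asyncio.wait_for(fetch_personalized(), timeout=timeout_s)
    except Exception:
        # Covers both the timeout and any error raised by the fetch itself.
        return STATIC_HERO
```

The caller always gets a string within the timeout, so the template never needs a loading state for hero text.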

Accessibility and Internationalization

Personalized layouts must not break keyboard navigation or screen reader order. Test dynamic inserts with automated a11y suites and manual passes. If you localize, avoid string concatenation that scrambles grammar—use full-sentence templates per locale.
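The difference between concatenation and full-sentence templates is easiest to see in code. In the sketch below each locale owns a complete sentence with named placeholders, so word order can differ per language. The catalog contents, the `render` helper, and the German sentence are illustrative only.

```python
# Full-sentence templates per locale; the catalog contents are illustrative.
TEMPLATES = {
    "en": {"demo_booked": "{name}, your demo is booked for {date}."},
    "de": {"demo_booked": "{name}, Ihre Demo ist für den {date} gebucht."},
}


def render(locale, key, default_locale="en", **values):
    """Fill a whole-sentence template, falling back to the default locale."""
    catalog = TEMPLATES.get(locale, TEMPLATES[default_locale])
    template = catalog.get(key) or TEMPLATES[default_locale][key]
    return template.format(**values)
```

Note that the verb lands at the end of the German sentence, which a concatenated "prefix + date" string could not express. Dates and plurals need locale-aware formatting as well, which this sketch leaves out.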

Right-to-left considerations may matter for Arabic-heavy experiences; plan mirroring and font stacks early, not as a patch.

Ethics, Consent, and Dark-Pattern Boundaries

Avoid manipulative urgency generated by models (“only one seat left” when false). Disclose when copy is personalized if regulations in your markets require it. Children’s segments and regulated health claims deserve stricter gates—often no LLM copy at all without legal pre-approval.

Trust Signals in an AI-Heavy Funnel

Show who maintains the site, link to security and privacy pages from personalized modules, and offer a human escalation path when users feel stuck. AI-native does not mean faceless—buyers still choose vendors they believe will answer the phone after the contract is signed. A visible support SLA or response window on pricing pages often lifts conversion more than another hero animation.

Key Takeaways

- Lovable combines clarity, speed, trust, and measured adaptation—never gimmicks that sacrifice comprehension.

- Ground personalization in declared intent first; use behavior carefully and disclose where required.

- Distinctive art direction and real proof beat template aesthetics in an AI-saturated web.

- Structure pages so assistants can quote you accurately: headings, visible facts, updated dates.

- Instrument relationship-depth metrics, not only CTR spikes from sensational copy.

- Guard performance and accessibility as tightly as brand voice when shipping generative UI.

FAQ

Will AI personalization hurt SEO?

If you serve materially different content to crawlers than to users (cloaking), yes. Keep core content visible and consistent; use personalization for ordering and emphasis, not deception.

How much on-page AI is too much?

If LCP regresses or copy drifts off-brand, throttle generation and cache approved blocks.

Do we need a headless CMS?

Not mandatory, but structured content models make personalization and localization safer and faster.

What about accessibility?

WCAG-compliant components and reduced-motion preferences still apply; test personalized layouts to the same bar as static pages.
